In the finale of our blog series, “Why Mechanical Engineering is Crucial to Data Centers”, we’ll take a look at energy efficiency, airflow management and containment strategies in data centers. Find Parts 1, 2, and 3 here:

Airflow Management

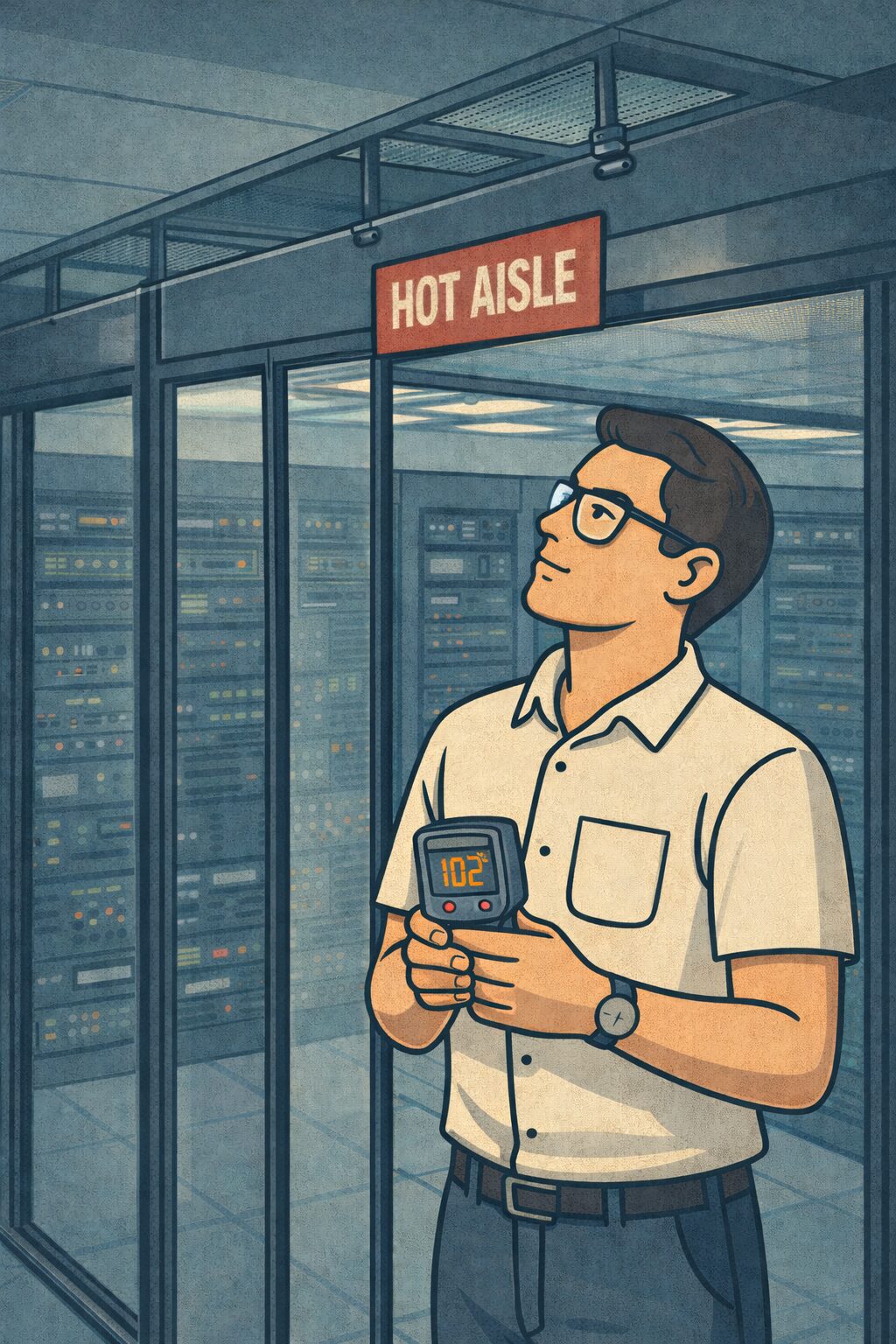

Airflow management is critical in data centers. To put it simply, we want the cold air to efficiently cool the IT equipment and the hot air to be efficiently removed, with minimal mixing. Many strategies are used: blanking panels (both vertical and horizontal) within the racks themselves, perforated floor tiles to direct air where it’s needed, and aisle containment, primarily either hot-aisle or cold-aisle containment.

Containment

Good containment strategies reduce the mixing of cool supply air and hot exhaust air. Improving the separation of these air streams can lead to significant reductions in mechanical cooling and fan operation, leading to operational cost savings. Additionally, more efficient cooling performance can reduce the likelihood of ‘hot spots’ within the IT space. High supply air temperatures may lead to equipment operational issues and/or failures.

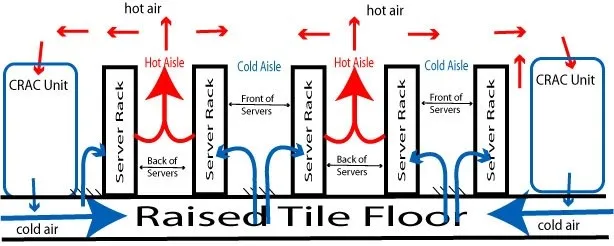

Historically, one of the first airflow efficiency remediations for IT rack cooling was the implementation of the hot-aisle/cold-aisle row configuration. This idea originated in the early 1990s, a time when the average rack heat load was generally less than 2 kW. In this configuration, the fronts of the racks in a row share one aisle (which is supplied with cool air, aka the ‘Cold Aisle’) and the rears of the racks share the next aisle (the ‘Hot Aisle’). See the diagram below.

This was a game-changer in its time. According to Energystar.gov, this configuration change generates cooling savings of 10 to 35 percent.

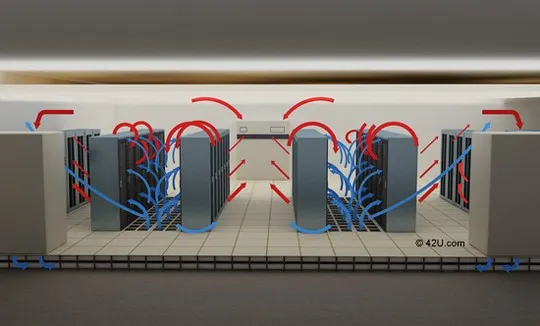

As rack heat loads increased over the years, the Hot Aisle/Cold Aisle solution alone was no longer as effective. Higher heat led to higher fan speeds and more air circulation. Balancing the supply and return/exhaust air flows became difficult. Cool air supplies were, at times, depleted before they reached all the equipment within a rack. This led to even higher fan speeds and eventually the intermingling of supply and exhaust air streams. Hot spots developed within the cold aisles, most evident near the tops of racks and at the ends of the aisles.

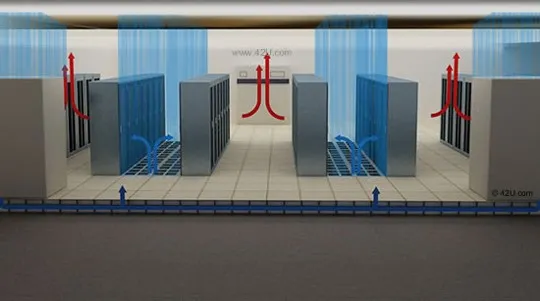

To combat this situation, engineers started developing additional means of segregating the air streams. Containment barriers were the most obvious solution. Today, the most common ways to contain and manage air are cold-aisle containment and hot-aisle containment:

- Cold-aisle containment (CAC) – enclose the cold aisle

- Hot-aisle containment (HAC) – enclose the hot aisle

Deploying these strategies has several common requirements:

- The data center needs to be designed with a hot-aisle/cold-aisle configuration

- The racks should be outfitted with blanking panels and other blocking components to limit the mixing of the cold and hot air through/within the rack

- Gaps between racks need to be minimal or blocked with containment materials

- Fire suppression systems must be analyzed against local code requirements to verify that the containment materials are allowable (as they are installed up to the ceiling and may be adjacent to sprinkler heads)

- Ends of aisles should be contained

There are a variety of containment options. The least expensive option is installing flexible strip curtains, similar to those seen in grocery store meat lockers. While inexpensive, they are harder to seal, especially over time. They are more difficult to manipulate than doors for access to the contained aisle. They also are harder to clean and can turn yellow and/or brittle from UV exposure.

Many containment systems are composed of framed clear plastic panels. The aisle ends can be sliding or swinging door systems, made from matching materials. These cost significantly more but have many advantages over strip containment.

The most efficient overall design is full containment, i.e. from the floor to the ceiling. However, it can be problematic, depending on the fire suppression system installed and local code requirements.

CAC can also be relatively effective in a partial implementation, installing containment from the tops of the racks to the floor. The cold air is heavier and stays near the floor, creating a ‘bathtub’ effect. This design works best with rack heat loads below 6 kW. An advantage of this system (in addition to lower cost) is that it generally does not impact the effectiveness of the existing fire suppression systems.

One way to design a new containment and airflow management system more effectively is to run a CFD analysis. CFD, or Computational Fluid Dynamics, models the airflow dynamics in the data center, allowing the engineer to determine how best to position racks, supplies and returns, and containment.

Energy Efficiency

While the headlines about data center energy and water usage paint a scary scenario for many consumers, data centers can be some of the most efficient facilities around. In addition to containment solutions, engineers are creative about other airflow and operational considerations.

In traditional raised floor data centers, airflow efficiencies can be improved by selecting the correct number of perforated tiles and correctly positioning them based on heat loads existing in the facility. It is also critical to keep the area under a raised floor as clear as possible. Often, during site assessments, our mechanical engineers will find multiple obstructions under a raised floor that are blocking airflow. This can cause the CRAC units to work harder and potentially create hot spots in the IT space.

In larger data centers, containment is very common. Many of the cloud data centers utilize evaporative coolers, which are air handlers that can use water to further reduce supply temperatures. These designs are typically deployed with hot-aisle containment. For the majority of the year, the facility will use outside air alone. Only when outdoor temperatures get very high will water use commence.

In AI deployments, liquid cooling, which is even more efficient at removing heat, is used in combination with air cooling to cool networking, storage, and other IT equipment. These designs will continue to reduce the total amount of energy needed to cool data centers.

PUE and WUE

As data centers continue to grow in size and energy demand, efficiency metrics like PUE and WUE have become essential tools for owners, operators, and engineers.

Power Usage Effectiveness (PUE) measures how efficiently a data center uses electricity. It compares the total facility power use to the power used by IT equipment only.

PUE = Total Facility Power ÷ IT Equipment Power

A perfect (and currently unachievable) PUE is 1.0, meaning all power goes directly to IT loads. However, IT loads still require cooling, which requires additional power. Over the past two decades, the data center industry has made tremendous improvements in both cooling technologies and computer systems’ tolerance of higher operating temperatures. Around 2005, a data center with a PUE of 2.0 (i.e. the power needed for cooling and other facility overhead equals the IT load) was considered good. In 2026, a PUE of 2.0 is considered very poor. Today, most modern facilities fall somewhere between 1.2 and 1.5. Many hyperscale facilities are even better, with a PUE between 1.09 and 1.2.
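To make the PUE arithmetic concrete, here is a minimal Python sketch. The function names and the 1,000 kW IT load are hypothetical, chosen only for illustration; the PUE values are the ones quoted above.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """PUE = Total Facility Power / IT Equipment Power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    if total_facility_kw < it_equipment_kw:
        raise ValueError("Total facility power cannot be less than IT power")
    return total_facility_kw / it_equipment_kw


def overhead_kw(pue_value: float, it_equipment_kw: float) -> float:
    """Non-IT power (cooling, fans, losses) implied by a given PUE."""
    return (pue_value - 1.0) * it_equipment_kw


if __name__ == "__main__":
    it_load_kw = 1000.0  # hypothetical IT load, for illustration only

    # PUE values taken from the ranges discussed in the text.
    for label, pue_value in [
        ("2005-era 'good' facility", 2.0),
        ("modern facility (upper end)", 1.5),
        ("modern facility (lower end)", 1.2),
        ("hyperscale facility", 1.09),
    ]:
        extra = overhead_kw(pue_value, it_load_kw)
        print(f"{label}: PUE {pue_value:.2f} -> about {extra:.0f} kW of overhead")
```

For the same IT load, moving from a PUE of 2.0 to 1.2 cuts the non-IT overhead from roughly the full IT load to about a fifth of it, which is why small changes in PUE matter at scale.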

Water Usage Effectiveness (WUE) is a metric for data center water efficiency. It measures how much water is used to cool each unit of IT energy.

WUE = Annual Water Usage ÷ IT Equipment Energy

WUE is typically expressed in liters or gallons per kilowatt-hour (kWh). A lower WUE indicates a more efficient cooling design, better water management, or the use of alternative cooling options or environmental control strategies that reduce the reliance on water.
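The WUE calculation can be sketched the same way. This is an illustrative Python example only: the function names, the 1,000 kW average IT load, and the annual water figure are all hypothetical, and the example assumes the IT load runs at that average for a full 8,760-hour year.

```python
LITERS_PER_US_GALLON = 3.785411784  # exact definition of the US liquid gallon
HOURS_PER_YEAR = 8760               # non-leap year


def annual_it_energy_kwh(average_it_load_kw: float) -> float:
    """IT energy for one year, assuming a constant average IT load."""
    return average_it_load_kw * HOURS_PER_YEAR


def wue(annual_water_liters: float, it_energy_kwh: float) -> float:
    """WUE = Annual Water Usage / IT Equipment Energy, in liters per kWh."""
    if it_energy_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if annual_water_liters < 0:
        raise ValueError("Water usage cannot be negative")
    return annual_water_liters / it_energy_kwh


def liters_to_us_gallons(liters: float) -> float:
    """Convert liters to US gallons for reporting in gallons per kWh."""
    return liters / LITERS_PER_US_GALLON


if __name__ == "__main__":
    it_energy = annual_it_energy_kwh(1000.0)  # hypothetical 1,000 kW average load
    water_liters = 4_380_000.0                # hypothetical annual water use

    wue_l_per_kwh = wue(water_liters, it_energy)
    wue_gal_per_kwh = liters_to_us_gallons(wue_l_per_kwh)
    print(f"WUE: {wue_l_per_kwh:.2f} L/kWh ({wue_gal_per_kwh:.3f} gal/kWh)")
```

A facility that uses no water for cooling would report a WUE of zero under this calculation, which is why the metric is best read alongside PUE rather than on its own.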

From a mechanical engineering perspective, PUE and WUE are directly impacted by HVAC system selection (note that not all systems utilize water), cooling plant efficiency, controls strategies, and climate conditions. Applying the methods discussed in this blog series can make a data center efficient. Optimizing these systems not only reduces operating costs but also supports sustainability goals and long-term operational reliability.

Understanding and tracking both metrics allows data center teams to make informed decisions, balancing performance, cost, and environmental responsibility.

Closing

We hope you found our mechanical engineering blog series useful!

That’s it for the series at this time, but if there is a topic you would like to see us cover in the future, please let us know!